By Scott B

Artificial intelligence is quite good at explaining the past. But its ability to predict the future may be no better than yours or mine.

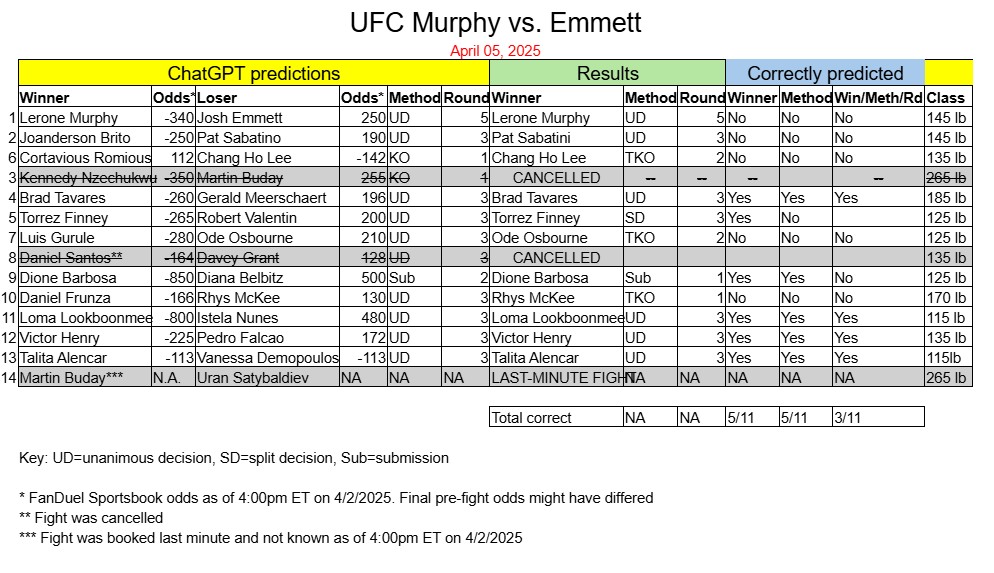

A week after correctly picking an impressive 8 of 12 winners at UFC Mexico City, ChatGPT nailed just 5 of 11 outcomes at UFC Vegas 105, an April 5 card headlined by featherweights Josh Emmett and Lerone Murphy.

ChatGPT’s predictions, issued on April 2, were nearly identical to those of the betting community at that time. It picked the FanDuel Sportsbook favorite in every fight but one, a bantamweight bout between Cortavious Romious (+112) and Chang Ho Lee (-142). ChatGPT’s gamble on the underdog Romious, who ended up missing weight, proved wrong when Lee KO’d him in Round 1.

(For ChatGPT’s fight-by-fight predictions and results, check out this chart. It reflects the UFC’s cancellation of 2 fights as well as a last-minute matchup that ChatGPT did not have the opportunity to predict.)

So why did ChatGPT get so many fights wrong? In a follow-up chat today, it cited 4 reasons — excuses? — quoted verbatim below:

1. Unpredictability of MMA

MMA is notoriously volatile. One well-timed strike, one scramble on the ground, or a split-second error can flip a fight. Even seasoned oddsmakers are often below 70% accuracy.

Example: A durable vet like Josh Emmett can land a knockout at any moment — even when he’s a significant underdog, as he was against Lerone Murphy.

2. Lack of Real-Time Adjustments

My April 2 predictions were based on available data at that time — fighter records, styles, past performance, betting lines, etc. But I don’t watch weigh-ins, hear insider gym buzz, or account for last-minute injuries, bad weight cuts, or personal issues unless explicitly updated.

Example: If a fighter had a tough cut or looked sluggish at weigh-ins, that might change the call — but I didn’t have that info on April 2.

3. Emerging Talent vs. Proven Veterans

This card featured a lot of debuting or still-developing fighters. When prospects like Luis Gurule or Robert Valentin show up and outperform expectations, it’s tough to forecast without live footage or more UFC-level experience to assess.

Example: Gurule and Frunza both pulled off solid wins that defied expectations.

4. Limited Data for Some Fighters

Some fighters had only a handful of pro fights, especially newcomers from the Contender Series or regional scenes. That makes detailed breakdowns tougher — even Tapology and FightMetric stats may be sparse.

Example: Torrez Finney had less than 10 pro fights and hadn’t faced UFC-caliber opposition before.

Bottom line: MMA predictions are part science, part guesswork — especially for cards like this one with shifting matchups, unknown quantities, and razor-close stylistic battles. Getting 5 out of 11 right may sound rough, but it’s not unusual in a sport where the margins are that thin.

It turns out AI programs like ChatGPT don’t have a crystal ball. So why should we bother asking them to predict UFC winners?

True, people putting money on fights probably shouldn’t rely on AI. But all of us — reporters, podcasters, TV analysts, fans and yes, even bettors — can benefit from the logical and well-informed “paths to victory” that programs like ChatGPT can lay out before a fight card takes place.

For this reason, expect Kneebar News to focus more on ChatGPT’s pre-fight analyses going forward, and less on the model’s all-too-mortal predictions.

# # #